"If you define, store, and reuse the recurring reasoning steps that come up when solving complex problems as 'behaviors,' you can spend fewer tokens while improving accuracy." That is the core idea of a paper from Meta.

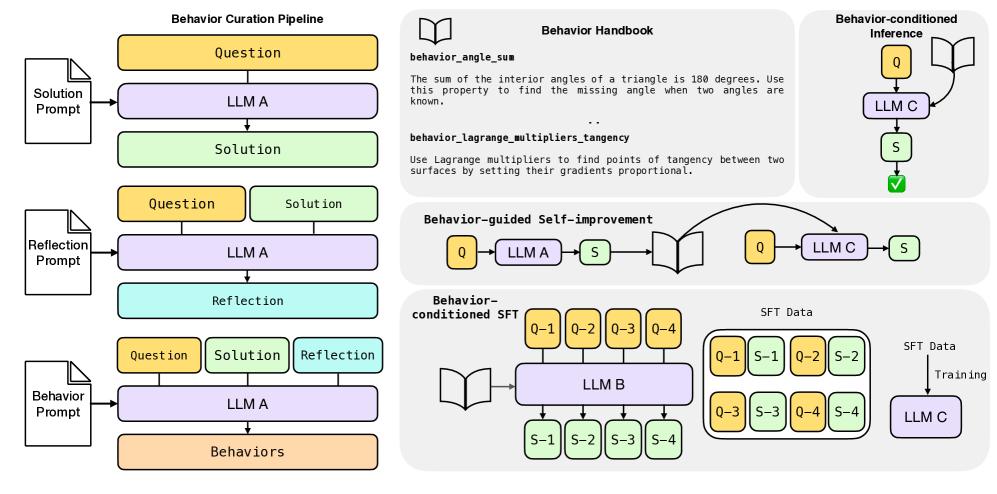

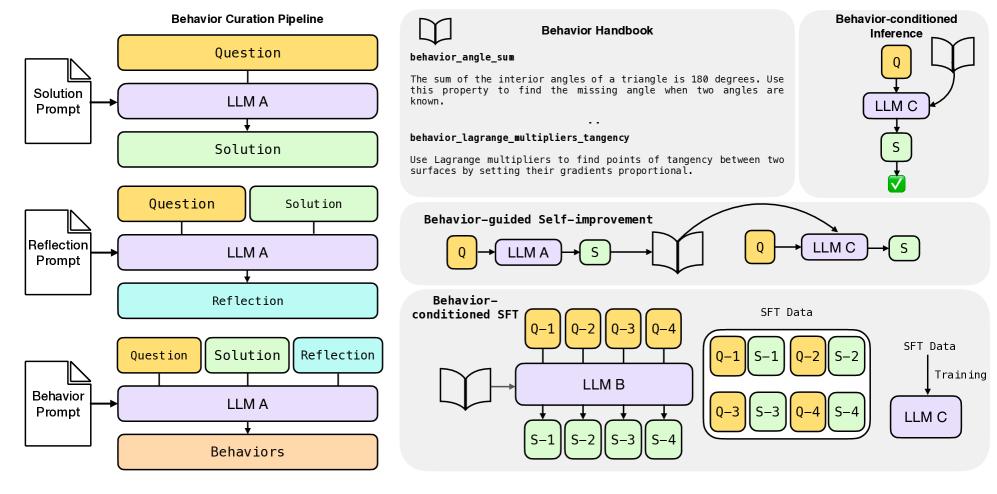

After solving a problem, the LLM reflects on its own reasoning process and extracts the generalizable steps that are likely to come up again. It stores each one in a behavior handbook as a "behavior name + short description" pair. Later, when it reuses those behaviors, the LLM can act quickly and concisely instead of working through a long chain-of-thought-style derivation. The key is not memorizing answers but storing how to think, in the form of behaviors.
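To make the handbook idea concrete, here is a minimal Python sketch of a store of "behavior name + short description" entries that get prepended to a later prompt. Everything in it is an assumption for illustration: the class names, the example behaviors, and especially the word-overlap retrieval, which is a toy stand-in and not the paper's retrieval method.

```python
import re
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Behavior:
    name: str         # short identifier (hypothetical naming scheme)
    description: str  # one-line instruction on how to think


def _words(text: str) -> set[str]:
    # Lowercase alphanumeric tokens; punctuation is dropped.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class BehaviorHandbook:
    behaviors: dict[str, Behavior] = field(default_factory=dict)

    def add(self, name: str, description: str) -> None:
        self.behaviors[name] = Behavior(name, description)

    def retrieve(self, question: str, top_k: int = 2) -> list[Behavior]:
        # Toy retrieval (an assumption): rank by word overlap with the question.
        q = _words(question)
        scored = [(len(q & _words(b.description)), b) for b in self.behaviors.values()]
        scored = [pair for pair in scored if pair[0] > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [b for _, b in scored[:top_k]]

    def build_prompt(self, question: str, top_k: int = 2) -> str:
        # Prepend the retrieved behaviors so the model can apply them directly.
        hints = [f"- {b.name}: {b.description}" for b in self.retrieve(question, top_k)]
        return "Behaviors you may use:\n" + "\n".join(hints) + f"\n\nQuestion: {question}"


handbook = BehaviorHandbook()
handbook.add("behavior_angle_sum",
             "the interior angles of a triangle sum to 180 degrees")
handbook.add("behavior_inclusion_exclusion",
             "when counting a union subtract the overlap to avoid double counting")

print(handbook.build_prompt(
    "Two angles of a triangle are 50 and 60 degrees. What is the third?", top_k=1))
```

In a real setup the extraction step would itself be an LLM call over the finished solution; that part is omitted here because its prompt format is not given in this post.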

The paper does not go into this, but if the approach works well, companies should be able to take the logs of LLM conversations in which employees worked through problems and automatically extract reusable Tools, Workflows, and Agents from them.

Roughly: a procedure where a fixed input reliably produces a fixed output, like a function, DB query, or API call, is a Tool. One that follows a fixed series of steps is a Workflow. One that loops until it succeeds is an Agent.
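The three shapes can be sketched in a few lines of Python. This is only an illustration of the distinction drawn above: the function names are hypothetical, and the `max_iters` cap on the agent loop is my own assumption so the example always terminates.

```python
from typing import Callable


def tool(x: int) -> int:
    """Tool: the same input always yields the same output."""
    return x * 2


def workflow(x: int, steps: list[Callable[[int], int]]) -> int:
    """Workflow: run a fixed series of steps in order."""
    for step in steps:
        x = step(x)
    return x


def agent(x: int,
          act: Callable[[int], int],
          done: Callable[[int], bool],
          max_iters: int = 10) -> int:
    """Agent: keep acting until the success check passes."""
    for _ in range(max_iters):
        if done(x):
            return x
        x = act(x)
    raise RuntimeError("agent did not succeed within max_iters")


doubled = tool(3)
chained = workflow(3, [tool, lambda v: v + 1])
reached = agent(1, act=tool, done=lambda v: v >= 10)
```

The only structural difference between the workflow and the agent here is who decides when to stop: the workflow's step list is fixed in advance, while the agent checks its own result after every action.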

https://harvest.pub/shared/3d5c3645-7199-43ff-9c2b-8fdc55a87c61