Introduction: The Allure and Reality of Agent Development

In this article, Hugo, an LLM systems expert who has coached numerous teams, shares his experience with the limitations of AI agent development and with more effective ways to use LLMs. Having collaborated with engineers from Netflix, Meta, the U.S. Air Force, and more, he unpacks common mistakes made when designing LLM-based systems and presents alternatives with real-world examples.

"Everyone starts with agents. They build memory systems, add routing logic, define tools and personas. It looks impressive. It feels like real progress.

Then everything breaks. And when things go wrong (they always do), nobody knows why."

Failure Cases and Lessons from Agent Systems

Six months ago, Hugo built a "research crew" using the CrewAI framework: 3 agents and 5 tools in what looked like a perfectly coordinated structure.

In practice, the following problems occurred:

"The researcher agent ignored the web scraper 70% of the time, and the summarizer agent completely forgot the citation tool when processing long documents.

The coordinator just gave up when tasks weren't clearly defined.

It was a great plan, but it fell apart spectacularly in reality."

Through these experiences, Hugo realized that the cases where agents are truly needed are extremely rare. As the flowchart below shows, agents are needed in only a very small portion of overall workflows.

What Is an Agent? And Why Is It Problematic?

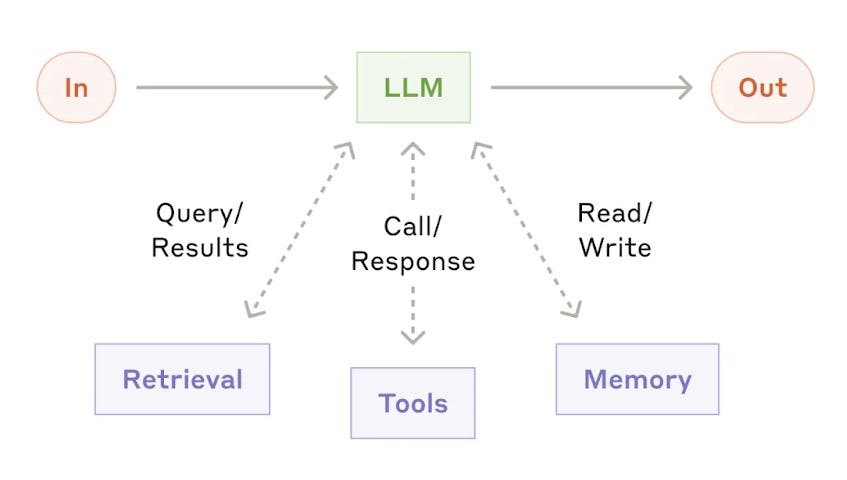

An agent is when an LLM has all four of these characteristics:

- Memory: The LLM remembers past interactions

- Retrieval: Context is added via RAG (Retrieval-Augmented Generation), etc.

- Tool use: Access to functions or APIs

- Workflow control: The LLM's output determines which tools to use and when. This last characteristic is what defines an agent.

"Most people jump straight to letting the LLM control the workflow.

But in reality, simpler patterns work much better in many cases."

The reason is that agents give the LLM too much freedom. Unless the situation is so dynamic that the workflow truly cannot be predefined, that freedom only adds complexity and instability.

Most Problems Can Be Solved with Simpler LLM Workflows

Hugo found a recurring pattern across many teams:

- Multiple tasks need to be automated.

- Agents seem like the answer.

- A complex system with roles and memory is built.

- Coordination turns out to be much harder than expected and everything breaks.

- They realize a simpler pattern would have been better.

Key takeaway: "Unless you truly need memory, delegation, and planning, start with simpler workflows like chaining or routing."

Five LLM Workflow Patterns (No Agents Needed!)

1. Chaining

- Example: Writing personalized emails from LinkedIn profiles

- Steps:

  - Extract structured data from profile text

  - Add context like company information

  - Generate personalized email

"Use this when tasks flow sequentially.

If one step fails, the whole chain can break, but it's easy to debug and the flow is predictable."
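The chaining steps above can be sketched roughly as follows. This is a minimal illustration, not Hugo's implementation: the `LLM` type, the prompts, and `fake_llm` are hypothetical stand-ins for whatever model client you actually use.

```python
from typing import Callable

# Hypothetical stand-in for a real model call: takes a prompt, returns text.
LLM = Callable[[str], str]


def extract_profile(llm: LLM, profile_text: str) -> str:
    """Step 1: pull structured fields out of raw profile text."""
    return llm(f"Extract name, role, and company as JSON:\n{profile_text}")


def add_company_context(llm: LLM, profile_json: str) -> str:
    """Step 2: enrich the extracted record with company information."""
    return llm(f"Add a one-line company summary to this record:\n{profile_json}")


def write_email(llm: LLM, enriched: str) -> str:
    """Step 3: generate the personalized email from the enriched record."""
    return llm(f"Write a short personalized email using:\n{enriched}")


def run_chain(llm: LLM, profile_text: str) -> str:
    """Run the steps in a fixed order; each output feeds the next step."""
    result = profile_text
    for step in (extract_profile, add_company_context, write_email):
        result = step(llm, result)
        if not result.strip():
            # Fail loudly at the step that broke, which keeps debugging simple.
            raise ValueError(f"{step.__name__} returned empty output")
    return result


def fake_llm(prompt: str) -> str:
    """Deterministic placeholder so the sketch runs without any API."""
    return f"[{prompt.splitlines()[0]}]"
```

Because the order of steps lives in ordinary code, a failure points at one named step rather than at an opaque decision made by the model.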

2. Parallelization

- Example: Extracting data from multiple profiles

- Steps:

  - Extract key fields from each profile in parallel (experience, skills, education, etc.)

  - Run all tasks simultaneously and collect results

"Useful for processing independent tasks quickly.

Watch out for race conditions and timeouts."
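One way to sketch this with Python's standard `concurrent.futures` is shown below. The `LLM` type, the prompt, and the default timeout are assumptions for illustration. Each task writes only to its own result slot, which avoids shared mutable state, and a per-task timeout bounds how long the caller waits for any single result.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Optional

# Hypothetical stand-in for a real model call: takes a prompt, returns text.
LLM = Callable[[str], str]


def extract_fields(llm: LLM, profile_text: str) -> str:
    """Extract key fields from a single profile."""
    return llm(f"Extract experience, skills, and education:\n{profile_text}")


def extract_all(
    llm: LLM,
    profiles: List[str],
    timeout_s: float = 30.0,
    max_workers: int = 4,
) -> List[Optional[str]]:
    """Process independent profiles concurrently, keeping input order."""
    results: List[Optional[str]] = [None] * len(profiles)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = [pool.submit(extract_fields, llm, p) for p in profiles]
        for i, future in enumerate(futures):
            try:
                # Wait at most timeout_s for this result. Note that leaving
                # the with-block still waits for threads that are running.
                results[i] = future.result(timeout=timeout_s)
            except Exception:
                # A timeout or a failed call leaves this slot as None
                # instead of aborting the whole batch.
                results[i] = None
    return results
```

Returning `None` for a failed profile is a design choice in this sketch; a production system might retry or record the error instead.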

3. Routing

- Example: Classifying inputs and routing them to different workflows

- Steps:

  - Classify the input (e.g., executive, recruiter, other)

  - Process with the appropriate handler for each classification

"Use this when different inputs need different processing.

Make sure to have a default path for edge cases."
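A minimal routing sketch might look like the following. The handler names, prompts, and the `LLM` type are hypothetical. The point is that the model only produces a label, while a plain dictionary lookup with a default decides which code path runs.

```python
from typing import Callable, Dict

# Hypothetical stand-in for a real model call: takes a prompt, returns text.
LLM = Callable[[str], str]


def handle_executive(llm: LLM, text: str) -> str:
    return llm(f"Write a concise, strategic email:\n{text}")


def handle_recruiter(llm: LLM, text: str) -> str:
    return llm(f"Write a friendly email about open roles:\n{text}")


def handle_other(llm: LLM, text: str) -> str:
    return llm(f"Write a neutral, general-purpose email:\n{text}")


# Known labels map to handlers; anything else falls through to the default.
HANDLERS: Dict[str, Callable[[LLM, str], str]] = {
    "executive": handle_executive,
    "recruiter": handle_recruiter,
}


def classify(llm: LLM, text: str) -> str:
    """Ask the model for a single label and normalize it."""
    label = llm(
        "Classify the sender as executive, recruiter, or other. "
        f"Reply with one word.\n{text}"
    )
    return label.strip().lower()


def route(llm: LLM, text: str) -> str:
    """Classify the input, then dispatch to the matching handler."""
    handler = HANDLERS.get(classify(llm, text), handle_other)  # default path
    return handler(llm, text)
```

An unexpected label such as a misspelling or a full sentence simply lands on `handle_other`, which is the default path for edge cases mentioned above.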

4. Orchestrator-Worker Pattern

- Example: Delegating email generation to specialized workers based on industry

- Steps:

  - LLM classifies the industry (IT/non-IT, etc.)

  - Delegates work to the appropriate worker based on classification

"The orchestrator controls the overall flow, and workers handle the detailed tasks.

Keep the orchestrator's logic simple and clear."
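As a rough sketch of the example above, the orchestrator below asks the model for an industry label and then delegates to a specialized worker. The `Worker` class, the worker names, and the prompts are all hypothetical. The orchestrator's logic is kept deliberately small: classify, validate the label in code, delegate.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple

# Hypothetical stand-in for a real model call: takes a prompt, returns text.
LLM = Callable[[str], str]


@dataclass
class Worker:
    """A specialized worker: a name plus its own fixed instructions."""
    name: str
    instructions: str

    def run(self, llm: LLM, profile: str) -> str:
        return llm(f"{self.instructions}\n{profile}")


WORKERS: Dict[str, Worker] = {
    "it": Worker("it_writer", "Write an email for someone in the IT industry:"),
    "non_it": Worker("general_writer", "Write an email for someone outside IT:"),
}


def orchestrate(llm: LLM, profile: str) -> Tuple[str, str]:
    """Classify the industry, then delegate to the matching worker.

    Returns the worker's name alongside its output so callers can see
    which path was taken.
    """
    raw = llm(
        "Is this person in the IT industry? Answer 'it' or 'non_it'.\n"
        f"{profile}"
    )
    label = raw.strip().lower()
    if label not in WORKERS:
        # The code, not the model, decides what happens on an unknown label.
        label = "non_it"
    worker = WORKERS[label]
    return worker.name, worker.run(llm, profile)
```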

5. Evaluation Loop

- Example: Evaluating email quality and iterating if it falls below standards

- Steps:

  - Generate email

  - Evaluator scores it

  - If below threshold, incorporate feedback and regenerate (with a max iteration limit)

"Use this when output quality matters more than speed.

Set clear exit conditions to prevent infinite loops."
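The loop above can be sketched as follows, assuming a generator and an evaluator that are passed in as plain callables. Their signatures, the default threshold of 0.8, and the limit of 3 iterations are illustrative assumptions, not values from the article.

```python
from typing import Callable, Tuple

# Hypothetical signatures; real ones would wrap model calls.
Generator = Callable[[str, str], str]            # (profile, feedback) -> email
Evaluator = Callable[[str], Tuple[float, str]]   # email -> (score, feedback)


def refine(
    generate: Generator,
    evaluate: Evaluator,
    profile: str,
    threshold: float = 0.8,
    max_iters: int = 3,
) -> Tuple[str, float]:
    """Generate, score, and regenerate until good enough or out of budget."""
    best_email, best_score = "", float("-inf")
    feedback = ""
    for _ in range(max_iters):  # hard cap, so the loop always terminates
        email = generate(profile, feedback)
        score, feedback = evaluate(email)
        if score > best_score:
            best_email, best_score = email, score
        if score >= threshold:  # exit condition: quality bar met
            break
    # If the threshold was never reached, return the best attempt seen.
    return best_email, best_score
```

Keeping the best draft rather than the last one is a choice made in this sketch, since a later regeneration can score lower than an earlier one.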

Key takeaway: "In most cases, you don't need an agent -- you need a better workflow structure."

When Do Agents Truly Shine?

Agents are useful in unstable workflows where humans can intervene to correct mistakes.

- For example, when an agent writes SQL queries, creates visualizations, and suggests analyses, while a human evaluates results and fixes logical errors.

"When an agent's creativity outperforms a rigid workflow,

and when a human can evaluate and correct results in the middle,

that's when agents show their real value."

Additionally:

- For creative tasks like headline brainstorming, copy editing, and structural suggestions, agents are effective when humans judge quality and provide direction.

When Agents Are NOT a Good Fit

- Enterprise automation: When stable, reliable software is needed, don't let LLMs make arbitrary decisions about critical workflows. Use patterns like the orchestrator pattern with clear control structures.

- High-stakes decisions (finance, healthcare, law, etc.): In domains requiring deterministic logic, don't rely on LLM guesswork.

Root Causes of Agent System Failures and Improvements

Key failure points and lessons from Hugo's CrewAI case:

- Agent made wrong context assumptions

  - Summarizer agent forgot citations in long documents

  - Fix: Implement explicit memory systems, not just role prompts

- Tool selection failures

  - Researcher agent used general search instead of the web scraper

  - Fix: Limit choices with a clear tool menu

- Coordination failures

  - Coordinator gave up when task scope was unclear

  - Fix: Build clear handoff protocols instead of free-form delegation
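Two of the fixes above, a clear tool menu and an explicit handoff protocol, could be sketched roughly like this. It is plain Python and does not use CrewAI's API; `TOOL_MENU`, `select_tool`, and `Handoff` are hypothetical names chosen for illustration. Carrying the sources inside the handoff is one simple form of explicit memory, so citations do not depend on the model remembering them.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

# Hypothetical tool menu: each role may pick only from its own short allow-list.
TOOL_MENU: Dict[str, Set[str]] = {
    "researcher": {"web_scraper"},
    "summarizer": {"citation_tool"},
}


def select_tool(role: str, requested: str) -> str:
    """Accept a tool request only if it is on the role's menu."""
    allowed = TOOL_MENU.get(role, set())
    if requested not in allowed:
        raise ValueError(
            f"{role!r} may not use {requested!r}; allowed: {sorted(allowed)}"
        )
    return requested


@dataclass
class Handoff:
    """Structured message passed between steps instead of free-form text."""
    task: str
    sources: List[str] = field(default_factory=list)

    def validate(self) -> None:
        """Reject vague or source-less handoffs before the next step runs."""
        if not self.task.strip():
            raise ValueError("handoff needs a concrete task description")
        if not self.sources:
            raise ValueError("handoff must carry its sources so citations survive")
```

For example, `select_tool("researcher", "general_search")` raises immediately, which surfaces a wrong tool choice at the moment it happens rather than in the final output.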

Key takeaway: "If you build agents, treat them like full software systems.

Make sure you have observability."
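As one small, hedged example of what observability can mean in practice, the decorator below logs the name, duration, and outcome of every step using only the standard library. The logger name, the `traced` decorator, and the `summarize` step are assumptions for illustration; a real system would likely send the same information to a tracing or metrics backend.

```python
import functools
import logging
import time

logger = logging.getLogger("llm_workflow")


def traced(step):
    """Wrap a workflow step so each call is logged with timing and outcome."""
    @functools.wraps(step)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            result = step(*args, **kwargs)
        except Exception:
            # Record the failure with its traceback, then re-raise unchanged.
            logger.exception(
                "step=%s failed after %.3fs",
                step.__name__, time.perf_counter() - start,
            )
            raise
        logger.info(
            "step=%s ok duration=%.3fs output_chars=%d",
            step.__name__, time.perf_counter() - start, len(str(result)),
        )
        return result
    return wrapper


@traced
def summarize(text: str) -> str:
    """Placeholder step; a real one would call a model."""
    return text[:40]
```

With every step wrapped this way, "nobody knows why it broke" becomes a log line naming the step that failed and how long it ran.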

Conclusion: Agents Are Overrated!

Simpler, Clearer Workflows Are the Answer

- Agents are overrated and overused

- In most cases, simpler patterns are more effective

- Agents shine when there's human involvement

- Don't use agents for stable enterprise systems

- Ensure observability and clear control structures

"In real production environments, simple patterns and direct API calls work far better than complex agent frameworks."

Summary Keywords

Agent, LLM Workflow, Chaining, Parallelization, Routing, Orchestrator-Worker, Evaluation Loop, Observability, Human-in-the-loop, Complexity, Stability, Real-world examples

Remember: rather than obsessing over AI agent development, designing simpler, clearer LLM workflows is actually more effective in practice.