Google Cloud has announced the public preview of Memory Bank, a new managed service for the Vertex AI Agent Engine. Memory Bank provides the core "long-term memory" capability that enables AI agents to maintain natural, personalized conversations. This article covers, in order, the memory problem agents face today, how Memory Bank works, how to use it, and where developers can find resources.

1. The Critical Limitation of AI Agents: 'Amnesia'

As AI agents become more prevalent, developers are working hard to build smarter, more personalized agents. However, in practice, they keep hitting a fundamental limitation: "the absence of memory." Agents treat every conversation as new, often asking repetitive questions and failing to remember user preferences or past information.

"Agents treat each interaction as if it's the first time. They repeatedly ask the same questions and can't remember the user's preferences, making personalized support difficult."

Agents without contextual awareness can't personalize conversations, which frustrates users and developers alike.

2. Previous Workarounds and Their Limitations

To solve this memory problem, the conventional approach has been to leverage the LLM's "context window." In other words, all previous conversation history was fed to the LLM along with the new query.

But the downsides of this approach are clear:

- Increased cost and slower responses: Stuffing everything into the context window dramatically increases computation, raising inference costs and slowing processing speed.

- Quality degradation: When irrelevant information accumulates in the context window, model response accuracy drops. Problems like "lost in the middle" and "context rot" emerge.

"Stuffing the entire conversation into the context window is expensive and inefficient. As input grows, response quality actually deteriorates."
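To make the cost argument concrete, here is a rough back-of-the-envelope sketch. It compares resending the full history on every turn with sending only the new message plus a fixed-size block of recalled memories. The token counts (200 per message, 300 for memories) are invented for illustration, and the second model is a simplification: in practice the current session's history is usually still sent.

```python
# Toy cost model, not a measurement. All token counts are assumed values.

def cumulative_full_history(turns: int, tokens_per_message: int) -> int:
    """Total input tokens when turn k resends all k messages so far."""
    return sum(k * tokens_per_message for k in range(1, turns + 1))


def cumulative_with_memories(turns: int, tokens_per_message: int,
                             memory_tokens: int) -> int:
    """Total input tokens when each turn sends one message plus a fixed memory block."""
    return turns * (tokens_per_message + memory_tokens)


if __name__ == "__main__":
    for turns in (10, 100):
        full = cumulative_full_history(turns, tokens_per_message=200)
        compact = cumulative_with_memories(turns, tokens_per_message=200,
                                           memory_tokens=300)
        print(turns, full, compact)
```

Under these assumptions, 10 turns cost 11,000 input tokens with full history versus 5,000 with a memory block, and 100 turns cost 1,010,000 versus 50,000. The first total grows quadratically with the number of turns, the second only linearly.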

3. Planting 'Real Memory' with Vertex AI Memory Bank

Google has now announced the public preview of Memory Bank to overcome these limitations. The service gives agents built on Agent Engine real, persistent memory, so conversations stay natural across sessions.

The four innovations Memory Bank brings are:

- Per-user personalization: It can make recommendations tailored to individuals over time, based on user preferences, past choices, and key events.

- Conversation continuity: Even over time and across different sessions, conversations can continue seamlessly without interruption.

- Better context delivery: By understanding user background information, it supports deeper and more valuable conversations.

- Improved experience: Users can communicate naturally and efficiently with the agent without having to repeat themselves.

"Just tell the agent once something like 'My preferred temperature is 71 degrees, and I like aisle seats on flights,' and the agent remembers it going forward!"

Memory Bank is integrated with Agent Development Kit (ADK) and Agent Engine Sessions. Conversation history can now be stored and managed per individual session, with Memory Bank additionally managing long-term memory. It can also be used with other frameworks such as LangGraph and CrewAI.

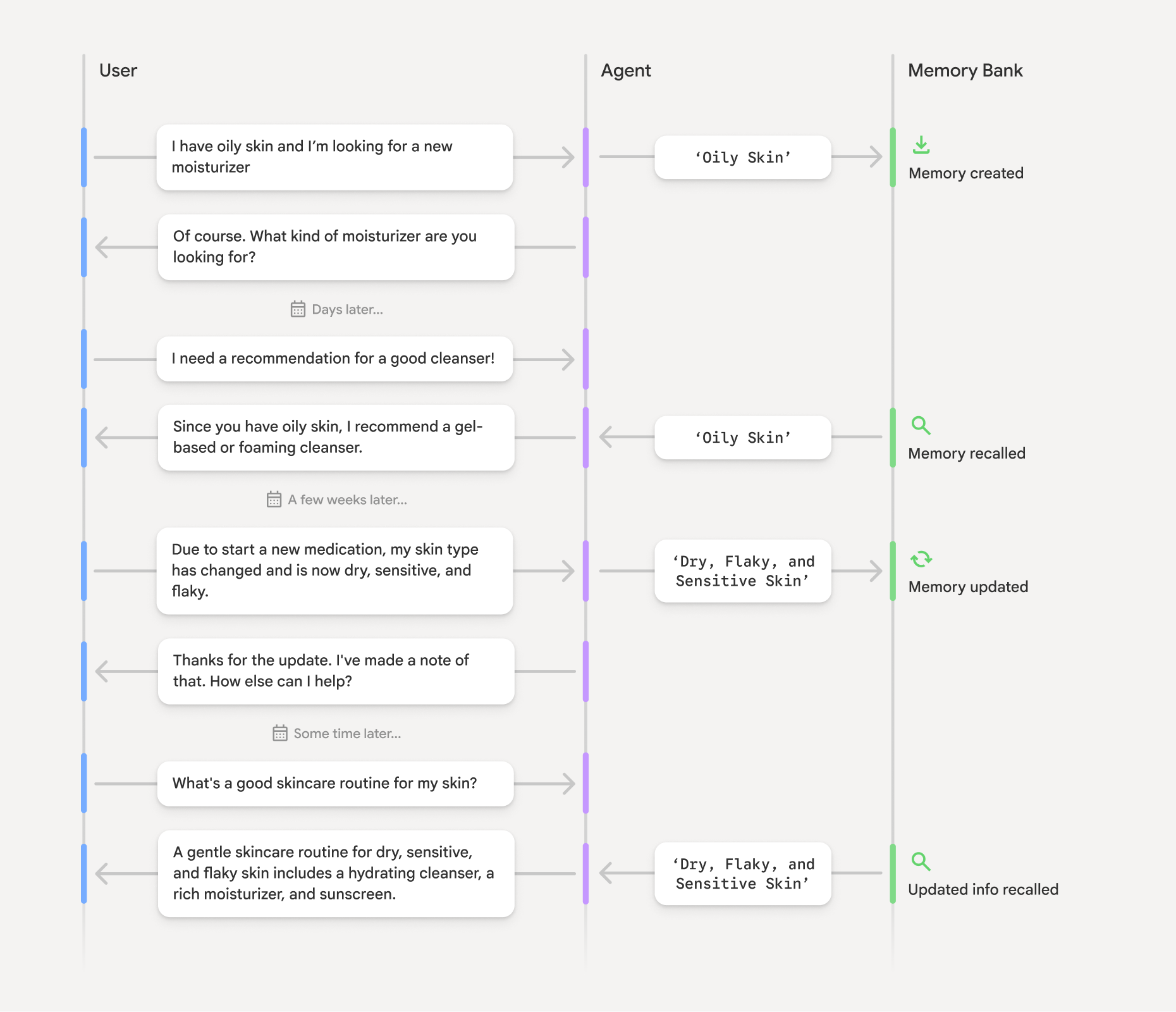

4. How Memory Bank Works: How Does It Manage Memories?

Memory Bank operates in three stages:

- Extracting memories from conversations: The conversation history between agent and user (Agent Engine Sessions) is analyzed by the Gemini model, which automatically distills important facts, preferences, and contextual information into "new memories." This process runs asynchronously and automatically without requiring a separate complex pipeline.

- Intelligent storage and updates: Extracted key information (e.g., "my skin type is combination," "recently purchased products") is structured and stored by user ID. When new information arrives, it is merged and updated with existing memories, automatically resolving contradictions.

- Context-aware memory retrieval: When a user starts a new conversation, the agent can instantly retrieve relevant memories. If needed, it goes beyond simple lookups to use embedding search, "pulling only the memories that fit the current situation" for use in responses.

This entire process is based on Google's latest research (accepted at ACL 2025), designed so that agents can intelligently learn and retrieve topic-based memories.
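The three stages can be illustrated with a deliberately tiny, in-memory toy. This is not how Memory Bank is implemented and not its API: a regular expression stands in for Gemini-based extraction, "newest value per topic wins" stands in for contradiction resolution, and bag-of-words cosine similarity stands in for embedding search. All function names here are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical store: memories[user_id][topic] -> latest known value.
memories: dict[str, dict[str, str]] = {}

# Stage 1 stand-in: a regex instead of an LLM. Only matches "my X is Y".
FACT_PATTERN = re.compile(r"my (?P<topic>[\w ]+?) is (?P<value>[\w ]+)", re.IGNORECASE)


def extract_facts(utterance: str) -> list[tuple[str, str]]:
    """Pull (topic, value) pairs out of one user utterance."""
    return [(m["topic"].lower(), m["value"].lower())
            for m in FACT_PATTERN.finditer(utterance)]


def consolidate(user_id: str, facts: list[tuple[str, str]]) -> None:
    """Stage 2 stand-in: store per user; a newer value replaces the older one."""
    user_memories = memories.setdefault(user_id, {})
    for topic, value in facts:
        user_memories[topic] = value


def _bag(text: str) -> Counter:
    return Counter(re.findall(r"\w+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(user_id: str, query: str, top_k: int = 1) -> list[str]:
    """Stage 3 stand-in: rank this user's memories by word overlap with the query."""
    q = _bag(query)
    scored = [(_cosine(q, _bag(f"{topic} {value}")), f"{topic}: {value}")
              for topic, value in memories.get(user_id, {}).items()]
    scored = [s for s in scored if s[0] > 0]  # drop memories with no overlap
    scored.sort(key=lambda s: s[0], reverse=True)
    return [text for _, text in scored[:top_k]]


if __name__ == "__main__":
    consolidate("user-1", extract_facts("My skin type is combination."))
    consolidate("user-1", extract_facts("My preferred temperature is 71 degrees."))
    consolidate("user-1", extract_facts("Update: my skin type is oily."))
    print(retrieve("user-1", "recommend something for my skin"))
```

In this toy, the later "oily" statement overwrites "combination", and the skincare query returns only the skin-type memory, not the temperature preference. A real system would need far more robust extraction, merging, and similarity search than these stand-ins.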

"The agent remembers from past conversations that the user's skin type has changed, and recommends skincare accordingly. That's the power of long-term memory!"

5. How Developers Can Get Started with Memory Bank

There are two ways to integrate Memory Bank into your agent:

- Build agents with Google ADK: The easiest way to integrate Memory Bank.

- Build agents using Memory Bank API calls: Easily integrate via API from other frameworks (LangGraph, CrewAI, etc.).
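Whichever path you choose, the agent loop tends to have the same shape: recall memories before calling the model, then hand the finished exchange back so new memories can be generated. The sketch below shows that shape in a framework-agnostic way. `MemoryClient`, `InMemoryClient`, and `run_turn` are hypothetical names made up for this example, not the ADK or Memory Bank API; consult the official guide for the real calls.

```python
from typing import Callable, Protocol


class MemoryClient(Protocol):
    """Hypothetical interface a memory backend might expose to an agent."""

    def retrieve(self, user_id: str, query: str) -> list[str]: ...

    def record(self, user_id: str, transcript: list[str]) -> None: ...


class InMemoryClient:
    """Stand-in backend for local experiments; keeps everything in a dict."""

    def __init__(self) -> None:
        self._facts: dict[str, list[str]] = {}
        self.transcripts: list[tuple[str, list[str]]] = []

    def seed(self, user_id: str, fact: str) -> None:
        self._facts.setdefault(user_id, []).append(fact)

    def retrieve(self, user_id: str, query: str) -> list[str]:
        # A real backend would rank by relevance to `query`; this returns everything.
        return list(self._facts.get(user_id, []))

    def record(self, user_id: str, transcript: list[str]) -> None:
        # A real backend would extract memories from the transcript asynchronously.
        self.transcripts.append((user_id, transcript))


def run_turn(user_id: str, message: str, memory: MemoryClient,
             llm: Callable[[str], str]) -> str:
    """One agent turn: recall, prompt the model, then store the exchange."""
    recalled = memory.retrieve(user_id, message)
    context = "\n".join(f"- {fact}" for fact in recalled) or "- (none)"
    prompt = f"Known facts about the user:\n{context}\n\nUser: {message}"
    reply = llm(prompt)
    memory.record(user_id, [f"user: {message}", f"agent: {reply}"])
    return reply


if __name__ == "__main__":
    client = InMemoryClient()
    client.seed("user-1", "prefers aisle seats on flights")
    # The lambda stands in for a real model call.
    print(run_turn("user-1", "Book me a flight to Seoul.", client,
                   lambda prompt: f"(model saw {len(prompt)} chars)"))
```

Keeping the memory backend behind a small interface like this makes it easy to swap the in-memory stand-in for a managed service later without touching the agent logic.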

Check the official user guide and developer blog for detailed examples, integration methods, and ready-to-use example notebooks.

For developers using ADK but new to Google Cloud, easy sign-up is available via express mode:

- Request an API key with a Gmail account

- Use the key to access Agent Engine Sessions and Memory Bank

- Develop and test within the free usage tier

- Scale to a full Google Cloud project for production

To learn more, join the Vertex AI Google Cloud Community to share questions and experiences.

Conclusion

Vertex AI's Memory Bank offers a practical solution to the "long-term memory" problem, the last missing puzzle piece in AI agent development. Agents can now remember individual users and deliver natural experiences with conversational continuity and context.

"Try conversing with an AI that remembers your preferences and history—no more repetitive explanations and frustration!"

If you're curious, try it out yourself with the official guides and samples.