Brian's Continuing Story: Taeho's Insight #28

What does it mean to delegate work well? It means entrusting what you or your company wants done to a team and achieving bigger results, faster, than you could alone. When you're recognized for good work and promoted to team lead, you start with small delegations and gradually increase their scope. Only when you can delegate everything without issues can you aim for the next promotion.

The secret to delegation is trust. You can only assign as much as you trust, and you can only wait as long as you trust. It's hard to hand a lot to a new team member right away. You start with small tasks, learn each other's styles, assign work based on strengths, and boldly step in to micro-manage whenever there's a bottleneck. Through this process, trust builds and shared context grows, so you can delegate bigger tasks and wait longer for the desired results.

I believe that prompt engineering, context engineering, harness engineering, Ralph-loop, and all the methodologies, skills, and frameworks for working with AI agents are fundamentally processes of building trust. It's the journey of developing confidence that when you assign a task of a certain size and wait a certain amount of time, the solution will come back.

Small, easy tasks can be handled with prompts, much like verbal instructions. Tasks that require context need onboarding documents or meeting notes shared with the agent, just as you would with a new colleague. Project-level work requires harness engineering: leveraging company-level tools, assets, and processes. Research or open-ended problem solving needs iteration cycles of evaluation and improvement, as in Ralph, that keep pushing until you get the desired results.

Like many people, I handle most of my work and personal tasks through AI agents. But now, tasks that AI handles easily simply disappear from my attention. Solved problems are no longer problems; they're things you can assign and stop thinking about. Many things will be solved as AI models and tools improve over time, but my work these days is entirely about helping AI with the things that need doing now but that it can't do well alone.

But I can't keep helping forever if I want compound engineering gains. I need to assist progressively less while delegating bigger tasks, waiting longer, and still getting the outcomes I want. This is where I've been treating "trust" like a value function in reinforcement learning, with good results.

When I assign work to an AI agent and sense that the result won't match my expectations, I do one thing: intervene. Whether mid-task or by restarting from scratch, I step in like a micro-manager, steering it this way and that and judging what's right and wrong. Higher trust means less intervention, so the frequency and intensity of my interventions are an excellent indicator of trust level.
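The post doesn't show how intervention frequency and intensity turn into a number. One minimal sketch, assuming a hypothetical three-level intensity scale (nudge, redirect, restart) and a made-up `trust_score` function, caps each session's summed intensity at one restart's worth and subtracts the average penalty from 1:

```python
from dataclasses import dataclass, field

# Hypothetical intensity scale; the post only distinguishes mid-task
# corrections from restarts, so these three levels are an assumption.
NUDGE, REDIRECT, RESTART = 1, 2, 3


@dataclass
class Session:
    task: str
    interventions: list[int] = field(default_factory=list)  # intensity of each intervention


def trust_score(sessions: list[Session]) -> float:
    """Return a score in [0, 1]; 1.0 means no session needed any intervention."""
    if not sessions:
        return 0.0  # assumption: no shared history yet means no trust yet
    penalties = [
        # Frequency and intensity both count: summed intensity is capped so that
        # one restart (or several smaller corrections) maxes out a session's penalty.
        min(1.0, sum(s.interventions) / RESTART)
        for s in sessions
    ]
    return 1.0 - sum(penalties) / len(penalties)


history = [
    Session("rename config keys"),          # merged untouched
    Session("add retry logic", [NUDGE]),    # one light correction
    Session("redesign schema", [RESTART]),  # scrapped and restarted
]
print(round(trust_score(history), 3))  # prints 0.556
```

Capping the per-session penalty keeps one disastrous session from outweighing many clean ones; weighting restarts more heavily than nudges is a design choice, not something the post prescribes.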

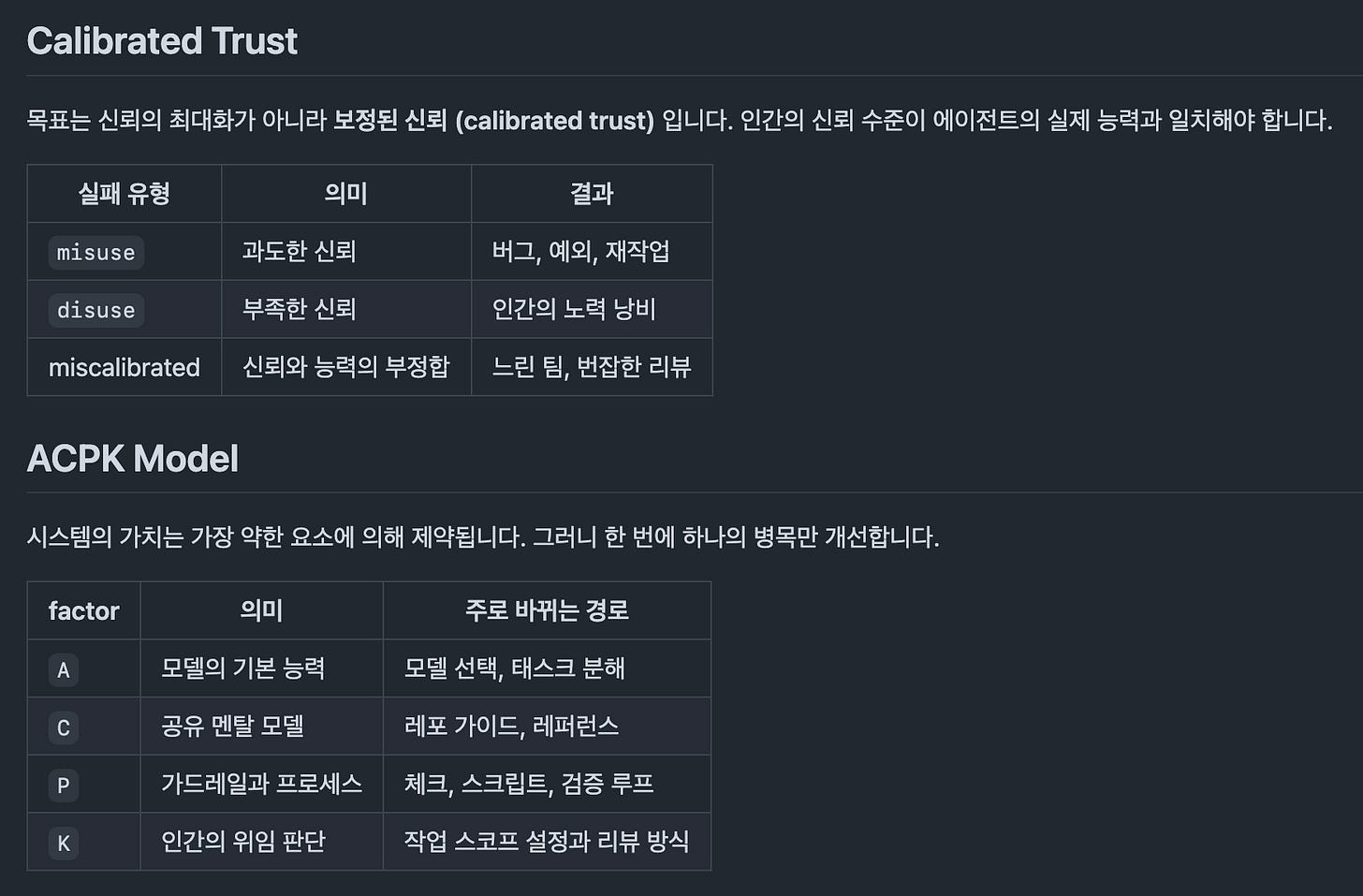

Traditional organizational trust research viewed trust as something built up through trial and error, whereas more recent research focuses on optimizing for calibrated trust rather than maximum trust. Instead of trusting equally across all tasks, it's better to avoid both over-trusting and over-intervening, depending on the specific work. This puts a premium on the observability of work within an organization, and from that perspective, session data from working with AI agents is the best data there is.
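Calibration can only be checked if a prediction is recorded before the work starts. As a sketch, assuming each task has a predicted success probability and a 0/1 outcome (both hypothetical fields), the gap between the mean prediction and the observed success rate shows the direction of miscalibration, and the Brier score, a standard scoring rule swapped in here for illustration, measures its overall size:

```python
def calibration_gap(predicted: list[float], succeeded: list[int]) -> float:
    """Mean predicted success minus observed success rate.

    Positive: predictions ran ahead of results (over-trust).
    Negative: results beat predictions (under-trust, likely over-intervening).
    """
    if not predicted or len(predicted) != len(succeeded):
        raise ValueError("need equal-length, non-empty lists")
    return sum(predicted) / len(predicted) - sum(succeeded) / len(succeeded)


def brier_score(predicted: list[float], succeeded: list[int]) -> float:
    """Mean squared error between predicted probability and 0/1 outcome (lower is better)."""
    if not predicted or len(predicted) != len(succeeded):
        raise ValueError("need equal-length, non-empty lists")
    return sum((p - o) ** 2 for p, o in zip(predicted, succeeded)) / len(predicted)


# Hypothetical history: two tasks predicted at 0.9, one at 0.2.
preds, outcomes = [0.9, 0.9, 0.2], [1, 0, 0]
print(calibration_gap(preds, outcomes))  # ~0.333: leaning toward over-trust
print(brier_score(preds, outcomes))      # ~0.287
```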

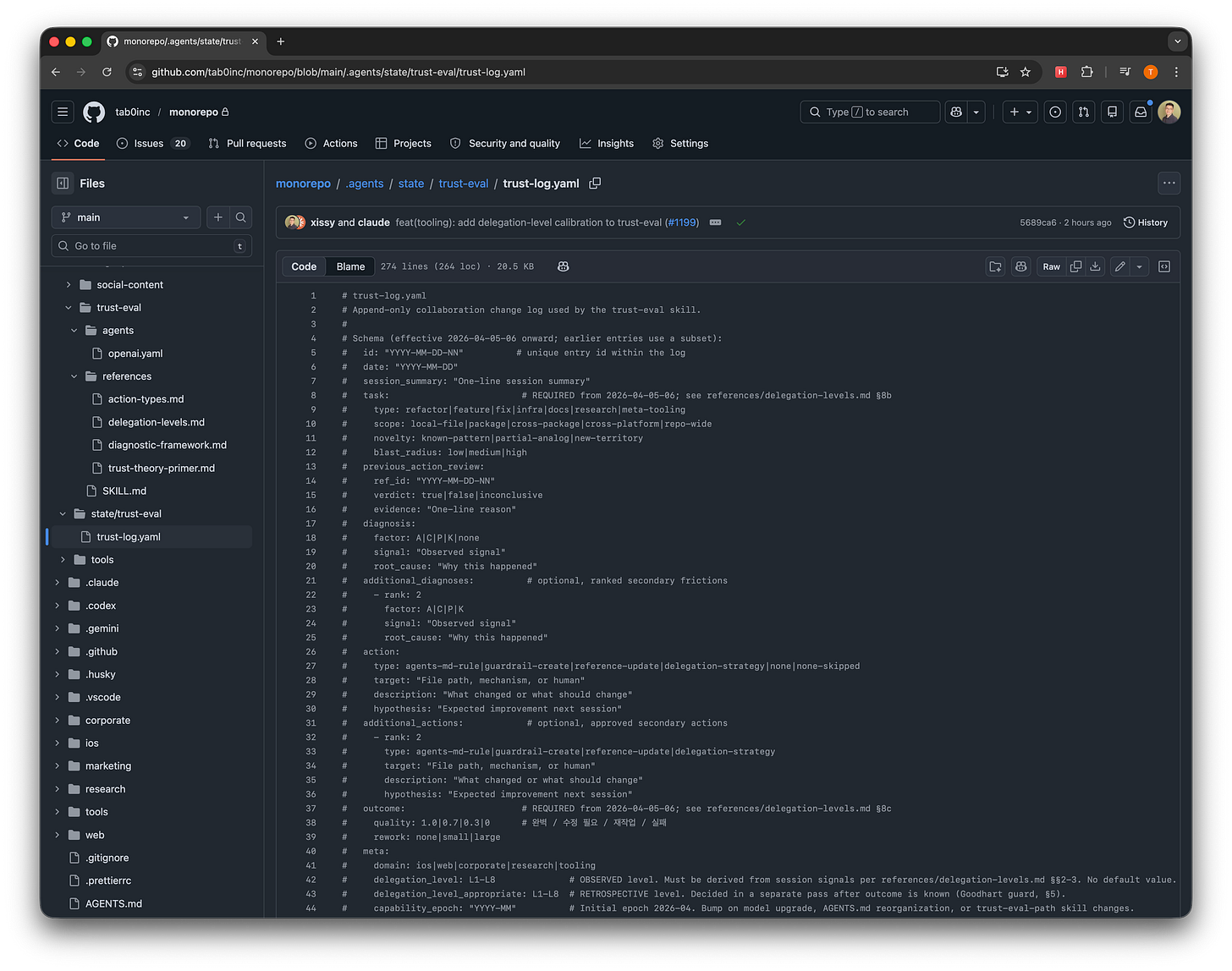

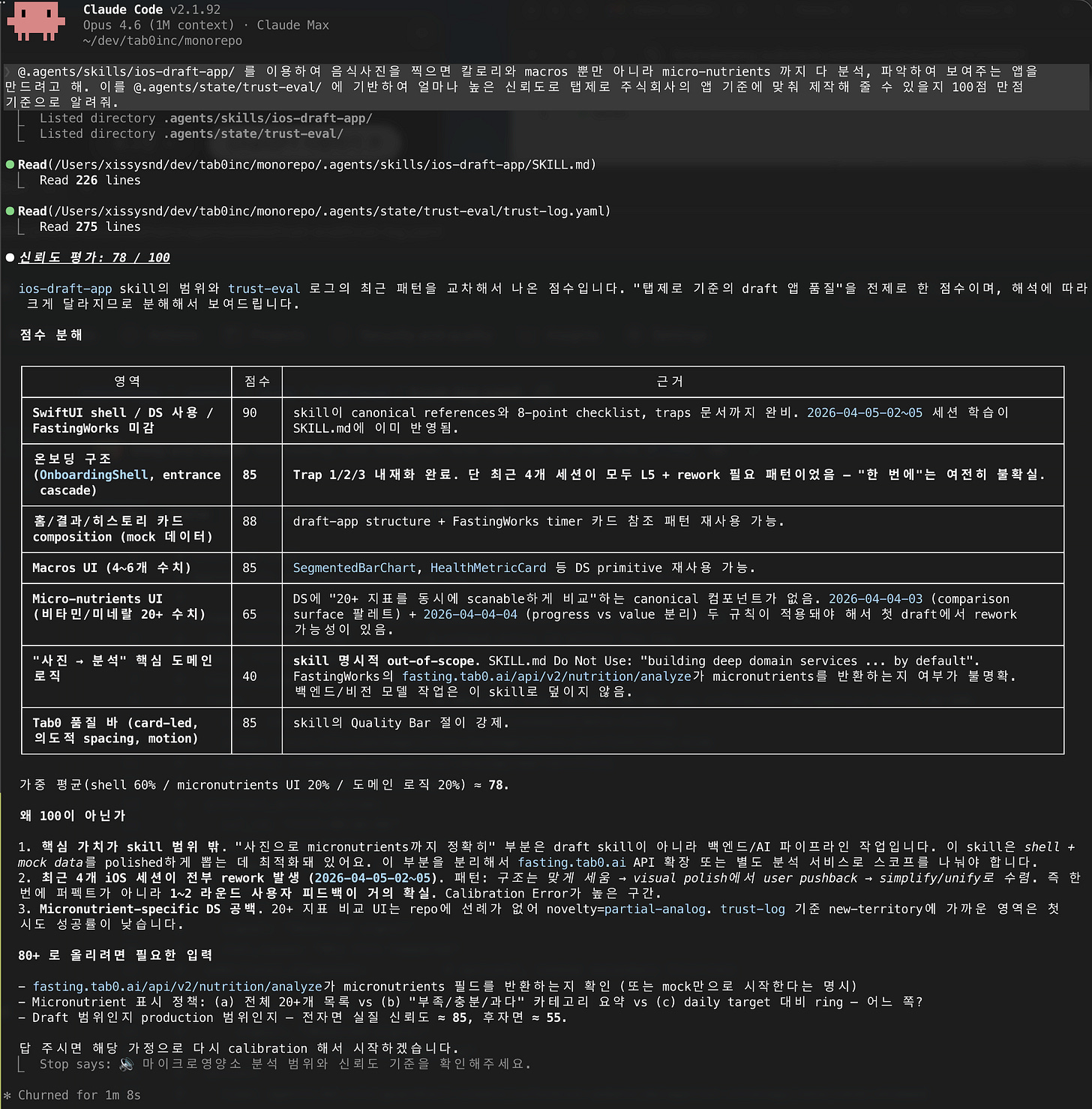

I configured a "Trust-eval" skill that triggers when my AI agent creates a PR, ends a work session, or when I ask for it. The skill reviews the session thoroughly, identifies which interventions occurred and at what intensity, and logs them. By recording where I stopped the agent, why that point concerned me, and which corrections keep repeating, the agent learns not to work the same way next time.

When a PR merges without any intervention, it means the task was either easy enough or executed in a trustworthy manner, so those are logged too.
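The post doesn't specify the Trust-eval skill's log format. A minimal sketch, assuming an append-only JSON Lines log and hypothetical `Intervention`/`TrustRecord` fields that mirror what the post says gets recorded (where, why, which correction, how intense), plus a helper that surfaces repeated corrections:

```python
import io
import json
from collections import Counter
from dataclasses import asdict, dataclass, field
from typing import Iterable, TextIO


@dataclass
class Intervention:
    step: str        # where I stopped the agent
    reason: str      # why that point concerned me
    correction: str  # what I asked it to do instead
    intensity: int   # hypothetical scale: 1 nudge, 2 redirect, 3 restart


@dataclass
class TrustRecord:
    task: str
    trigger: str  # hypothetical values: "pr_created", "session_end", "on_request"
    merged: bool = False
    interventions: list[Intervention] = field(default_factory=list)


def append_record(record: TrustRecord, out: TextIO) -> None:
    """Append one record as a JSON line; clean, zero-intervention merges are logged too."""
    out.write(json.dumps(asdict(record)) + "\n")


def repeated_corrections(lines: Iterable[str], min_count: int = 2) -> dict[str, int]:
    """Count corrections that recur across the log, i.e. habits the agent should drop."""
    counts = Counter(
        iv["correction"]
        for line in lines
        if line.strip()
        for iv in json.loads(line)["interventions"]
    )
    return {c: n for c, n in counts.items() if n >= min_count}


if __name__ == "__main__":
    log = io.StringIO()  # stands in for a hypothetical append-only trust_log.jsonl
    append_record(TrustRecord("bump deps", "pr_created", merged=True), log)
    fix = "reuse the existing HTTP client"
    for task in ("add webhook", "add export job"):
        append_record(
            TrustRecord(task, "session_end", interventions=[
                Intervention("wrote a new client", "duplicates infra", fix, 2)
            ]),
            log,
        )
    print(repeated_corrections(log.getvalue().splitlines()))
    # {'reuse the existing HTTP client': 2}
```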

Using these trust-evaluation records, whenever the AI agent plans a large task, it first performs a trust prediction: it estimates the probability that it will deliver results in the form I want. Rather than relying on a model's general capabilities or generic internet search results, this prediction increasingly reflects the standards that my company and I value, which makes the trust-score check enormously helpful for gauging how work is progressing.
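The post doesn't say how the trust prediction is computed. One simple stand-in, not necessarily the author's method, is a Beta-Bernoulli estimate per task category: count past tasks delivered in the desired form, add a uniform prior so categories with no history start at 0.5, and map the probability to a delegation mode using arbitrary, hypothetical thresholds:

```python
from dataclasses import dataclass


@dataclass
class Outcome:
    category: str  # hypothetical task label, e.g. "refactor" or "ui"
    success: bool  # delivered in the desired form without heavy intervention


def predict_trust(history: list[Outcome], category: str,
                  prior_success: float = 1.0, prior_failure: float = 1.0) -> float:
    """Posterior mean of a Beta-Bernoulli model for one task category.

    With the default uniform Beta(1, 1) prior this is Laplace smoothing:
    a category with no history predicts 0.5.
    """
    results = [o.success for o in history if o.category == category]
    return (sum(results) + prior_success) / (len(results) + prior_success + prior_failure)


def delegation_mode(p: float, hands_off: float = 0.8, checkpoints: float = 0.5) -> str:
    """Map a trust prediction to how much to delegate (thresholds are arbitrary)."""
    if p >= hands_off:
        return "delegate and wait"
    if p >= checkpoints:
        return "delegate with checkpoints"
    return "stay close and intervene early"


history = [Outcome("refactor", True)] * 4 + [
    Outcome("refactor", False),
    Outcome("ui", False),
    Outcome("ui", False),
]
p = predict_trust(history, "refactor")  # (4 + 1) / (5 + 2), about 0.714
print(delegation_mode(p))               # delegate with checkpoints
```

The prior keeps a single lucky or unlucky task from swinging the estimate to 0 or 1, which fits the post's point that trust is built gradually.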

There are already many good AI agent frameworks and skills out there. I still build my own system because everyone wants different things from AI and values different things. You need to build value steadily through compound engineering, but methods others swear by may not work for you, and approaches that worked for you may not work for other teams.

Tasks with a single right answer are already solved. What's needed is to train your AI collaboration toward your desired outcomes using judgment criteria that don't exist on the internet: your tastes, your standards. These can become your own value function.

When I apply to AI agents the skills of trust, intervention, and delegation that I learned from working with people in organizations, I can only marvel at how powerfully it accelerates things on multiple fronts.

Thank you.