This piece warns that a new polling method called "silicon sampling," which uses artificial intelligence in place of human respondents, is distorting our picture of public opinion and threatening the information ecosystem. It examines the limitations of traditional polling, explains in detail the problems with silicon sampling, and considers the serious social consequences it could produce. Beyond the mechanics of collecting opinion, the essay stresses the danger of AI-generated synthetic opinions being accepted as if they were real, and urges readers to take that danger seriously now.

1. The Rise of Manufactured Opinion: The Shocking Truth About Silicon Sampling 🤯

In March 2026, a shocking revelation emerged: a set of "survey findings" on maternal health policy published in an Axios article turned out to be a computer simulation run by Aaru, an AI startup. The results came from a method called silicon sampling, and no real people's opinions were involved at all.

"As a click-through revealed (and as an editor's note and clarification from Axios later made clear), the poll was a computer simulation conducted by Aaru, an AI startup. No humans were involved in generating the opinions."

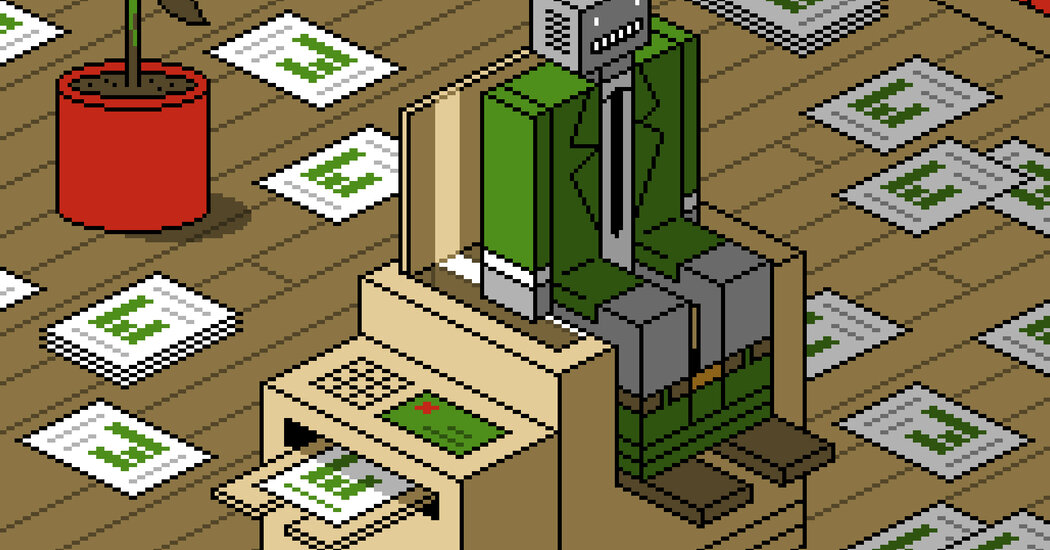

Silicon sampling exploits the ability of large language models (LLMs) to generate responses that mimic human answers, allowing polling firms to simulate surveys at a fraction of the cost and time of traditional methods. As telephone polls find it ever harder to reach respondents and the quality of web polls grows more uncertain, silicon sampling is appealing because it skips the cumbersome and expensive step of actually asking people.

2. Silicon Sampling Undermines the Very Purpose of Polling 😟

But this approach carries a grave problem: it undermines the fundamental purpose of polling. Public opinion plays a crucial role in guiding policy, politics, and social science, and it has value only when it summarizes the actual beliefs and views of real people. Substituting simulated opinion for the real thing, the authors argue, will only worsen an already broken information ecosystem and sow broader social distrust. We should not, they stress, rely on an artificial society to understand a real one.

"But this undermines the foundational idea of polling. Public opinion is used to guide policy, politics and social science, and it has value only when it summarizes the actual beliefs and opinions of real humans. Using simulations of human opinion in place of the real thing will only worsen our broken information ecosystem and sow distrust. We should not rely on an artificial society to understand a real one."

In his 1922 book Public Opinion, journalist Walter Lippmann wrote that people act on "the pictures in our heads" of a society they cannot observe directly, and that democracy needs tools to correct those pictures. Polling is one such tool: even imperfect surveys remain essential for capturing the public will accurately.

3. The Limits of Traditional Polling and the Biases Built Into Models 📊

Of course, traditional polling is not without flaws. A small margin of error requires a large sample, and unbiased results require that sample to be representative, yet reaching busy people today is no easy task. Polling firms therefore rely on statistical models to adjust for variables that can skew results.
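To make the link between sample size and margin of error concrete, here is a minimal Python sketch of the textbook 95 percent margin of error for a proportion. It assumes a simple random sample and the normal approximation. It also ignores the extra error that weighting adds, so real polls' margins are somewhat wider. The function name `margin_of_error` is ours and is only for illustration.

```python
import math


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Half-width of a normal-approximation confidence interval for a proportion.

    Assumes a simple random sample (no weighting or design effect).
    p: observed proportion, e.g. 0.5 for an evenly split question
    n: number of respondents
    z: critical value; 1.96 gives roughly 95% confidence
    """
    return z * math.sqrt(p * (1 - p) / n)


# Worst case (p = 0.5) for a few sample sizes.
for n in (250, 1000, 4000):
    print(f"n={n:>5}: +/- {margin_of_error(0.5, n) * 100:.1f} points")
```

This prints about 6.2, 3.1, and 1.5 points. Because the error shrinks with the square root of n, halving the margin of error takes four times as many respondents. That is why hard-to-reach respondents make good polling so expensive.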

For example, suppose 80 percent of a poll's respondents on a particular policy are Republicans and 20 percent are Democrats. A polling firm that believes the true split is closer to 50-50 will reweight the responses accordingly, counting each Democrat's answer more heavily and each Republican's less. The polling numbers we see are therefore the output of a model, not raw survey data.
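The reweighting step in this example can be sketched as simple post-stratification (cell weighting) in Python. Everything here is hypothetical: the 100 respondents, the assumption that 30 percent of Republicans and 70 percent of Democrats support the policy, and the helper name `poststratify`. Real pollsters usually weight on several variables at once (for example by raking), which this sketch does not attempt.

```python
from collections import Counter


def poststratify(respondents, target_shares):
    """Weighted share of respondents who support a policy.

    respondents: list of (group, supports) pairs, e.g. ("R", True)
    target_shares: the pollster's *assumed* population share of each group;
        it must contain every group that appears in the sample.
    Each respondent in group g gets weight target_share[g] / sample_share[g],
    so groups the pollster believes are under-sampled count for more.
    """
    n = len(respondents)
    counts = Counter(group for group, _ in respondents)
    weights = {g: target_shares[g] / (counts[g] / n) for g in counts}
    total = sum(weights[g] for g, _ in respondents)
    support = sum(weights[g] for g, supports in respondents if supports)
    return support / total


# Hypothetical sample: 80 Republicans (24 support), 20 Democrats (14 support).
sample = (
    [("R", True)] * 24 + [("R", False)] * 56
    + [("D", True)] * 14 + [("D", False)] * 6
)

raw = sum(supports for _, supports in sample) / len(sample)
weighted = poststratify(sample, {"R": 0.5, "D": 0.5})
print(f"raw: {raw:.0%}, reweighted to 50-50: {weighted:.0%}")
```

The raw sample shows 38 percent support. After each Republican is weighted by 0.625 and each Democrat by 2.5, the published figure becomes 50 percent. The answers did not change; only the pollster's belief about who should have been in the sample did.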

The problem is that every model carries its own built-in biases. In 2016, Nate Cohn, now the New York Times' chief political analyst, gave the raw data from a single election poll to four other pollsters and compared their estimates with the Times' own. The five estimates spanned 5 percentage points. Because every pollster started from identical data, none of that spread can be blamed on random sampling error; it came entirely from modeling choices. Through those choices, polling firms can do more than report what the public thinks. They can shape the picture of public opinion itself.
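A toy Python sketch shows how this happens. It is not a reconstruction of Cohn's experiment, in which pollsters adjusted for many variables. Instead, it holds one hypothetical set of raw results fixed (30 percent support among Republicans, 70 percent among Democrats) and varies only each imagined pollster's assumption about the party mix of the electorate. The names `weighted_support`, `observed`, and `assumptions` are illustrative.

```python
def weighted_support(group_support, assumed_shares):
    """Topline support implied by one set of modeling assumptions.

    group_support: support rate observed within each group (the fixed raw data)
    assumed_shares: the pollster's assumed share of each group in the electorate
    """
    return sum(assumed_shares[g] * group_support[g] for g in group_support)


# Hypothetical raw data, identical for every "pollster".
observed = {"R": 0.30, "D": 0.70}

# Hypothetical modeling assumptions about the electorate's party mix.
assumptions = {
    "pollster A": {"R": 0.45, "D": 0.55},
    "pollster B": {"R": 0.50, "D": 0.50},
    "pollster C": {"R": 0.55, "D": 0.45},
}

estimates = {
    name: weighted_support(observed, shares)
    for name, shares in assumptions.items()
}
for name, est in estimates.items():
    print(f"{name}: {est:.0%}")

spread = max(estimates.values()) - min(estimates.values())
print(f"spread: {spread * 100:.0f} points")
```

The three estimates come out at 52, 50, and 48 percent. That is a 4-point spread with no difference in sampling error, since every "pollster" used the same data. Each 5-point shift in the assumed Republican share moves the topline by 2 points, which is the gap between the two groups' support rates (0.70 minus 0.30) times 0.05.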

4. Silicon Sampling Makes All of These Problems Worse 😨

Silicon sampling amplifies these existing problems. Proponents argue that sophisticated predictive computer simulations, trained on historical data, can accurately simulate human behavior and forecast the future. But the whole point of polling is to capture what people think right now, not to predict it.

The authors find this far-fetched, and they note that there is plenty of evidence that the method does not produce especially reliable results. A recent study, not yet peer-reviewed, suggests that the biases that distort conventional polls distort silicon sampling figures much more strongly. The further a simulation moves from actual humans, the more it becomes a mirror reflecting the pollster's own views.

"This might sound far-fetched. We certainly think so. What's more, there is plenty of evidence that this method doesn't produce especially reliable results. A recent study (not yet peer reviewed) suggests that the biases that distort polling numbers distort silicon sampling numbers much more strongly. The further you get from humans, the more the simulation becomes a mirror reflecting the views of the pollster."

Despite this, AI modelers continue to push silicon sampling forward, and enormous sums of money are flowing in. Ipsos has partnered with Stanford University to pioneer the use of AI and synthetic data in market and opinion research through "digital twins" — virtual representations of real survey respondents. Gallup has partnered with silicon sampler Simile to create 1,000 AI-generated digital twins for clients, and CVS has also partnered with the startup to "answer questions about its customers."

5. The Proliferating Silicon Sampling Industry and Its Social Consequences 💸

Companies offering these services are multiplying rapidly, drawing hundreds of millions of dollars from major Silicon Valley investors. They promise "reliable proxies for human behavior" and pitch themselves enticingly to anyone who wants to know what people think before acting. Market research is expected to be the field where silicon sampling spreads most widely, since it can dramatically cut the upfront costs of launching a business.

Yet the authors warn that if silicon sampling is not checked, trust in polling, and in social science research more broadly, could be severely damaged. Like Aaru's fully simulated poll, these findings risk being nothing more than speculation dressed up as objective fact. Claiming that "most people trust their doctors and nurses" on the basis of an AI survey is simply a false statement about people, because no people were asked.

Aaru's simulation, released the day before the 2024 U.S. presidential election, predicted a narrow Kamala Harris victory; Donald Trump won. Cases like this show how pure fiction risks being treated as scientific and political knowledge. If we cannot reverse this trend, the authors worry that even our understanding of society itself may become artificial. 😔

Conclusion

In the end, silicon sampling may look like an efficient and cost-effective approach to polling on the surface, but in reality it is a dangerous technology that distorts the very purpose of public opinion research and risks serious disruption to the information ecosystem. If AI-generated synthetic opinions rather than real human views come to drive society's most important decisions and shared perceptions, we will inevitably drift further from genuine democracy and social consensus. Now is the time to think carefully and proceed with great caution when it comes to the development of these technologies. 💡