The body usually gets worse quietly. Bedtime slips later without notice, workouts get skipped, fast food starts filling the gaps, and then one day you suddenly think, "Why does my body feel so heavy these days?"

I used to dismiss that as a passing slump. I assumed I was simply busy and that a few days would fix it. But after gaining weight and going through burnout, I realized I could not keep living like that. Vague resolutions like "I should be healthier" were not enough.

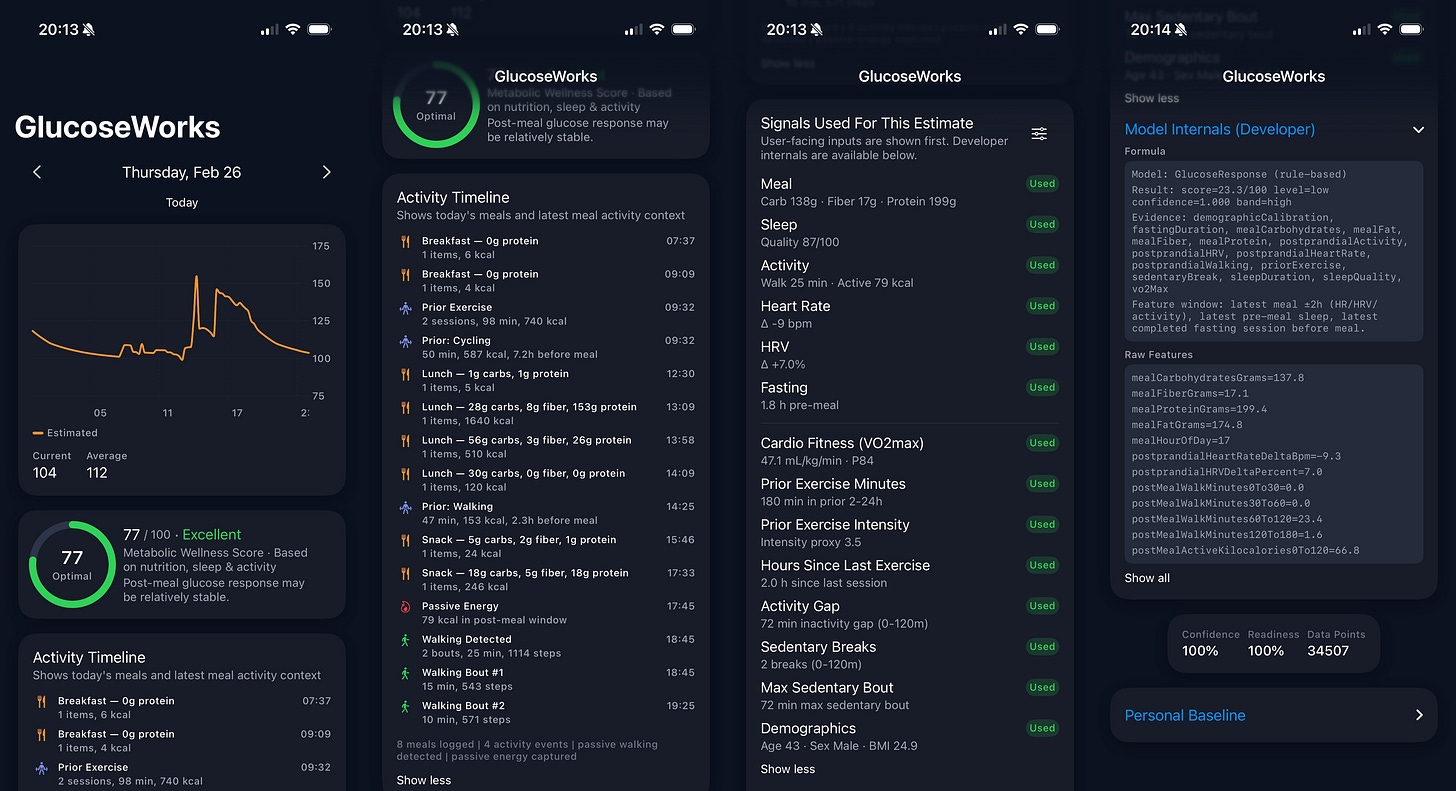

A lot has changed since I bought an Apple Watch in 2021. I could finally see signals from my body as numbers and graphs: sleep, heart rate, activity, heart-rate variability, and more. I started noticing how moving bedtime earlier changed my recovery the next day, how overly intense workouts made work harder, and how better food improved how I felt. Once I started seeing those patterns, my attitude toward my body changed too. Instead of surviving on instinct, I began forming small hypotheses, changing inputs, and observing what led to what.

"Why was I running experiments with data for work, but managing my own body purely by feel?"

In startups, we look at metrics, form hypotheses, run experiments, inspect results, and move on to the next step. It felt strange to realize that I had almost never applied that same process to my own body.

1. Trying a CGM

An Apple Watch already gave me a lot, but over time I wanted to see more directly what was happening inside my body. That urge was strongest when my habits began slipping again: sleep got worse, exercise dropped off, and nutrition fell apart. That is when I wore a CGM, a continuous glucose monitor.

For me, a CGM was not something to wear all the time. It was more like a recalibration tool I would use once every few months whenever my lifestyle started drifting. The same food produced different responses depending on whether I had slept well, whether I had exercised the day before, and whether my general balance was off. With a CGM, those differences became much clearer. Whenever I felt my life losing balance, I would put one on and use it to steer my body back into a better cycle.

The problem was cost. Without a diabetes diagnosis, insurance does not cover a CGM, and the full expense comes out of pocket. It was useful as an occasional check, but what I really wanted was not a once-in-a-while snapshot. I wanted to read the direction my body was heading much more frequently.

That led me to a natural question:

"Apple Watch is already tracking heart rate, HRV, sleep, and activity all day. If I add nutrition data on top of that, could I build a model that produces a useful metabolic estimate even without a CGM?"

Signals were already flowing from my wrist continuously. If I added information about what and when I ate, maybe I could read my metabolic state to a meaningful degree without having to keep buying and reattaching an expensive CGM.

2. The Papers Suggested It Was Not a Fantasy

At first this was only a vague idea, but the more papers I read, the more interesting it became. A number of studies were already showing that when you combine wearable signals with meal data, you can explain post-meal responses and glucose patterns more meaningfully than I had assumed. Some papers used familiar signals like heart rate, activity, and sleep. Others expanded to skin temperature and optical signals. The body leaves traces, and if you connect those traces well enough, you can read metabolism to some degree.

Meals mattered more than I expected. Sleep had a meaningful impact on next-day post-meal response. I started looking at causal and correlational relationships across papers, weighting them based on the quality of the evidence and how often the findings were supported. I tried to determine which signals deserved more trust and which ones should be treated more cautiously.
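To make that weighting concrete, here is a minimal Python sketch. It assumes each finding from a paper is tagged with a study design and whether it supports the signal. The design scores, the `Finding` record, and the `signal_trust` function are all hypothetical illustrations, not the scheme actually used here.

```python
from dataclasses import dataclass

# Hypothetical quality scores per study design; the numbers are illustrative only.
DESIGN_QUALITY = {
    "rct": 1.0,
    "cohort": 0.6,
    "cross_sectional": 0.4,
    "case_report": 0.2,
}


@dataclass(frozen=True)
class Finding:
    signal: str     # e.g. "sleep", "hrv", "skin_temp"
    design: str     # key into DESIGN_QUALITY
    supports: bool  # True if the paper supports the signal's relevance


def signal_trust(findings):
    """Return a 0..1 trust score per signal.

    Supporting evidence is weighted by study quality, and the +1.0 in the
    denominator keeps a signal backed by a single weak paper from scoring high.
    """
    totals = {}
    for f in findings:
        quality = DESIGN_QUALITY[f.design]
        supported, total = totals.get(f.signal, (0.0, 0.0))
        if f.supports:
            supported += quality
        totals[f.signal] = (supported, total + quality)
    return {s: sup / (tot + 1.0) for s, (sup, tot) in totals.items()}


# Made-up findings, only to show the call shape.
example = [
    Finding("sleep", "rct", True),
    Finding("sleep", "cohort", True),
    Finding("skin_temp", "case_report", True),
]
trust = signal_trust(example)
```

With these made-up inputs, `sleep` ends up with more trust than `skin_temp` because it is backed by more, and stronger, studies. That ordering is the property the weighting is meant to capture.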

Over time, the structure became clearer. In meals, carbohydrate and sugar intake, along with meal order, mattered a lot. On the wearable side, heart rate, HRV, sleep, and activity repeatedly appeared as meaningful signals. Walking right after a meal mattered. So did the residual effect of the previous day's exercise. And on days with poor sleep, the same food often hit the body much harder.

3. Building My Own Estimation Model

The next step was simple in spirit: based on those paper-backed relationships, I wanted to test whether combining wrist-based signals with my meal logs could tell me which direction my body was moving right now. You could call it machine learning or a paper-based predictive model, but what mattered to me was much simpler:

"Does this become a tool that is actually useful in my life?"

I did not begin by writing code immediately. I first used an LLM to turn the model's behavior into a concrete spec. Which signals should go in? Which ones should matter more? Which ones should only be supporting clues? I forced those choices to be grounded in papers rather than intuition. When I compared the early model's output against finger-prick measurements and the trends I had seen while wearing a CGM, there were stretches where the directions lined up remarkably well.

The exact glucose value did not have to match perfectly. What mattered more was that the trend aligned. If I know what I ate, how much and how I moved, how I slept the night before, and what my HRV says about stress response, then it is not that strange to say I can estimate my current metabolic state. After all, without those signals we are forced to puncture the skin, wear a CGM, or chase non-invasive biomarker technologies just to measure blood glucose directly.
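One simple way to put a number on "the trend aligned" is to compare the direction of change between consecutive samples, ignoring absolute values. The sketch below assumes the model output and the reference readings (CGM or finger-prick) are sampled at the same timestamps. The function names and tolerances are hypothetical.

```python
def direction(delta, tolerance):
    """Classify a change as rising (1), falling (-1), or flat (0)."""
    if delta > tolerance:
        return 1
    if delta < -tolerance:
        return -1
    return 0


def trend_agreement(estimated, reference, est_tol=0.0, ref_tol=0.0):
    """Fraction of consecutive steps where both series move the same way.

    The two series may be on different scales (a unitless score versus
    mg/dL), which is why each gets its own flatness tolerance.
    """
    if len(estimated) != len(reference):
        raise ValueError("series must have the same length")
    if len(estimated) < 2:
        raise ValueError("need at least two samples to define a trend")
    steps = len(estimated) - 1
    matches = 0
    for i in range(steps):
        est_dir = direction(estimated[i + 1] - estimated[i], est_tol)
        ref_dir = direction(reference[i + 1] - reference[i], ref_tol)
        if est_dir == ref_dir:
            matches += 1
    return matches / steps


# Made-up numbers: a model score next to reference glucose in mg/dL.
score = [0.2, 0.5, 0.9, 0.7, 0.4]
glucose = [92, 110, 141, 128, 105]
agreement = trend_agreement(score, glucose, est_tol=0.05, ref_tol=5)
```

A metric like this rewards a model that rises and falls at the right times even when its absolute numbers are off, which matches the goal of reading direction rather than exact values.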

When I evaluated and calibrated the model against five years of my Apple Health data, the patterns became even clearer. Certain foods consistently pushed my post-meal glucose in the same direction. If my glucose stayed inexplicably elevated for a couple of days, I was more likely to get sick afterward. On poor-sleep days, the same meal was more likely to spike glucose or delay recovery.

That was exactly what I wanted: a way to detect more quickly when my body was reacting differently than usual.

4. Garbage In, Garbage Out. What-If and Intervention

In the beginning, the moments where the model was wrong were actually more instructive than the moments where it was right. Coffee was a good example. It is a low-carb event, but when the model looked mainly at heart rate and HRV, it sometimes interpreted the signal as if it were a post-meal metabolic response. To a human looking at the chart, that interpretation felt off: the model was over-reading movement in the physiological signals.
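One possible guard against the coffee problem is to gate heart-rate evidence by the carbohydrate content of whatever was logged. The following is a minimal sketch of that idea, not the fix the model actually uses. The function name and the 10 g threshold are hypothetical.

```python
def post_meal_evidence(hr_rise_bpm, carbs_g, carb_threshold_g=10.0):
    """Scale a heart-rate rise by how plausible a glucose response is.

    The gate goes linearly from 0 (no carbs logged) to 1 (at or above the
    threshold), so a near-zero-carb intake cannot be read as a meal
    response however much the heart rate moves.
    """
    gate = min(max(carbs_g, 0.0) / carb_threshold_g, 1.0)
    return gate * max(hr_rise_bpm, 0.0)


# Made-up inputs: the same heart-rate rise after black coffee and after a meal.
coffee = post_meal_evidence(hr_rise_bpm=12.0, carbs_g=0.0)
lunch = post_meal_evidence(hr_rise_bpm=12.0, carbs_g=60.0)
```

Here the heart-rate rise is identical in both calls, but only the logged meal produces any post-meal evidence.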

Overlapping meals created another issue. On days when lunch was followed by a snack and then an early dinner, the initial model tended to add those responses too mechanically. Scores would shoot up and stay elevated longer than they should. Reality did not seem to behave that simply, so I kept revisiting that assumption.
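To see why purely additive stacking overshoots, compare it with a combination rule that saturates toward a ceiling. The sketch below is one alternative under stated assumptions, not the rule the model settled on. The response curve shape, the 45-minute time to peak, and the ceiling of 1.0 are all hypothetical.

```python
import math


def meal_response(minutes_since_meal, peak, minutes_to_peak=45.0):
    """Toy single-meal response: rises to `peak` at `minutes_to_peak`, then decays."""
    if minutes_since_meal <= 0:
        return 0.0
    x = minutes_since_meal / minutes_to_peak
    return peak * x * math.exp(1.0 - x)


def additive(responses):
    """Naive stacking: overlapping meals simply sum."""
    return sum(responses)


def saturating(responses, ceiling=1.0):
    """Combine responses so each extra meal adds less as the total nears the ceiling."""
    remaining = 1.0
    for r in responses:
        clipped = min(max(r, 0.0), ceiling)
        remaining *= 1.0 - clipped / ceiling
    return ceiling * (1.0 - remaining)


# Made-up day: lunch, a snack two hours later, an early dinner two hours after that.
meals = [(0, 0.6), (120, 0.3), (240, 0.6)]  # (minute eaten, peak score)
now = 285
responses = [meal_response(now - eaten, peak) for eaten, peak in meals]
stacked = additive(responses)
combined = saturating(responses)
```

With a single meal the two rules give the same value. They only diverge when responses overlap, which is where the additive version kept scores elevated for too long.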

What helped most at this stage was grounding every change in the literature. Instead of trying to fit patterns purely from my limited personal data, I used already refined hypotheses from papers as the framework for improvement. That was the point where I became more convinced that, after coding, AI agents may go on to deliver major breakthroughs in science and research.

Once the model became reasonably stable, it opened the door to what-if experimentation. Why is my body reacting differently today? What if I had gone to sleep two hours earlier? What if I had eaten a salad instead of jajangmyeon at lunch? What if I had done Zone 2 instead of interval training in the morning? Once those scenarios accumulate, they naturally connect to intervention and next-action suggestions.
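In code, a what-if question amounts to re-scoring the same day with one input changed and looking at the difference. The sketch below uses a deliberately toy scoring function. The `Day` fields, the coefficients, and the example carbohydrate amounts are hypothetical placeholders, not the real model.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Day:
    sleep_hours: float
    lunch_carbs_g: float
    post_meal_walk_min: float


def toy_score(day):
    """Toy post-lunch load score; higher means a heavier expected response.

    All coefficients are hand-picked for illustration only.
    """
    sleep_penalty = max(0.0, 7.5 - day.sleep_hours) * 0.1
    carb_load = day.lunch_carbs_g / 100.0
    walk_relief = min(day.post_meal_walk_min, 30.0) / 30.0 * 0.3
    return carb_load * (1.0 + sleep_penalty) * (1.0 - walk_relief)


def what_if(day, **changes):
    """Score change if the given fields had been different (negative = lighter load)."""
    alternative = replace(day, **changes)
    return toy_score(alternative) - toy_score(day)


# Made-up day: short sleep, a carb-heavy lunch, no walk afterwards.
actual = Day(sleep_hours=5.5, lunch_carbs_g=110.0, post_meal_walk_min=0.0)
earlier_bedtime = what_if(actual, sleep_hours=7.5)
lighter_lunch = what_if(actual, lunch_carbs_g=30.0)
walk_after_lunch = what_if(actual, post_meal_walk_min=20.0)
```

Sorting those deltas gives a crude ranking of which single change would have helped most, which is the bridge from what-if scenarios to next-action suggestions.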

5. But This Must Not Be Used Like a Medical Device

This is where the line has to be clear. Even if the model is grounded in papers, it is still a personalized tool calibrated and tested for me. It should not be used like a medical device. If someone already has diabetes or is far outside the normal glucose range, the errors could grow much larger, and misleading signals could become dangerous. It should never be treated as a substitute for direct measurements such as finger-prick testing or CGM.

That does not make the attempt meaningless. What mattered to me was not diagnosis, but direction. How does post-meal response change after a few bad nights of sleep? Why does the whole day feel heavier when I stop exercising? What kinds of meals keep me feeling steady? For questions like those, the model was genuinely useful.

6. This Is a Fascinating Moment

What I felt most strongly while building this model and app is that we are living through a genuinely interesting moment. In the past, understanding the body usually meant going to a hospital, wearing expensive devices, or depending heavily on experts. Now, a lot of signal already comes through the wrist, and the papers, data, and AI/ML tools that help interpret those signals are growing quickly too.

What makes that combination exciting is not just glucose estimation. It is that things once perceived only vaguely, like recovery, readiness for exercise, stress response, and post-meal response, are slowly becoming legible signals. I think the future will bring not just glucose, but more biomarkers and far better personalized models. More people will be able to read and adjust their bodies naturally and routinely.

Apple already offers blood-pressure alerts based on Apple Watch data and AI models. In the United States the feature launched after FDA clearance last year, and in Korea it began service this February after regulatory approval. The mere fact that we have entered an era where I can know and prepare for my body more often, at lower cost, and in a more understandable way is, to me, genuinely exciting.